da/sec scientific talk on Biometrics

Topic: Zero-Leakage Biometric Cryptosystems in the Deep Learning Era: Feature Encoding and Error Correction Strategies

by Hans Geißner

D19/2.03a (also online via the corresponding BBB room), March 19, 2026 (Thursday), 12.00 noon

Keywords — Biometric Cryptosystems, Biometric Template Protection, Feature Type Transformation

Abstract

Biometric template protection (BTP) is a critical challenge in biometric recognition. Many popular schemes were designed before the shift from handcrafted to deep-learning-based feature extraction and have since been adapted to work with these new feature vectors. For biometric cryptosystems (BCS), a class of BTP schemes, these adaptations have mostly been simple feature transformations that map between feature spaces while preserving distance.

However, the advent of deep learning has introduced new security concerns: deep feature vectors retain enough information to allow reconstruction of synthetic biometric samples, making them vulnerable to inversion attacks. In BCS this is particularly critical, because upon a false match the protected secret is released. An attacker who obtains a false match needs only to reverse the feature transformation to access the original feature vector. This creates a unique requirement for extremely low false match rates (FMR) in BCS.

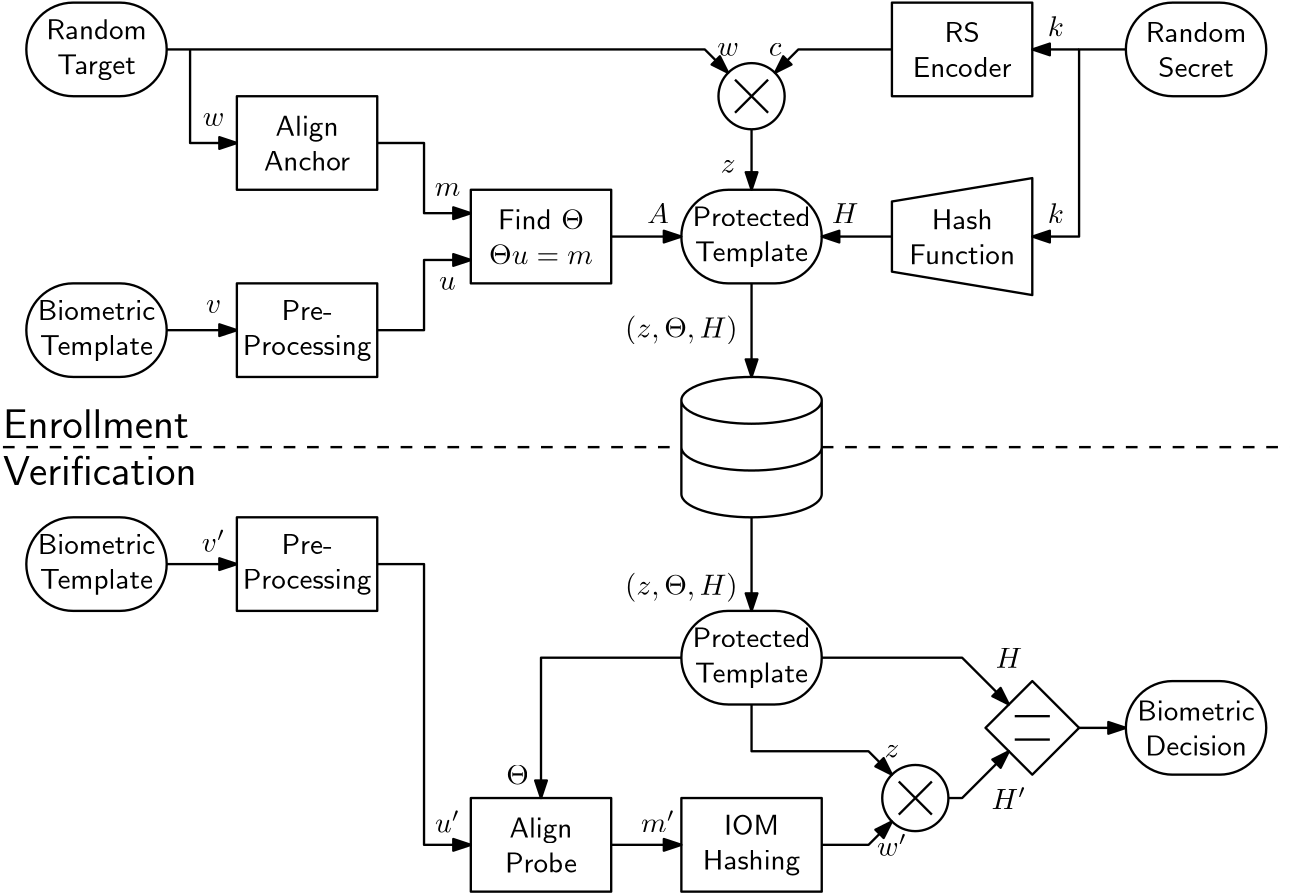

Achieving such low FMR in BTP schemes leads to another challenge: the protected template must retain enough information from the original data to enable these low FMR levels, while simultaneously leaking no information about the original biometric. BCS address this by binding the template to a randomized secret. During a comparison, the system attempts to recover this secret using a biometric probe and the protected template; correctness is verified via a stored hash of the secret. While this hard-decision approach is effective and mitigates score leakage, it introduces a new concern: leakage of information about the secret itself.
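
The bind, recover, and verify steps described above can be illustrated with a minimal fuzzy-commitment-style sketch. It is not the scheme presented in the talk: the repetition code is a deliberately simple stand-in for a real error-correcting code, and the names `REP`, `enroll`, and `verify` are hypothetical. Binary feature vectors whose length is a multiple of `REP` are assumed.

```python
import hashlib
import secrets

REP = 5  # hypothetical repetition factor; a stand-in for a real ECC


def encode(secret_bits):
    # Repetition code: repeat every secret bit REP times.
    return [b for b in secret_bits for _ in range(REP)]


def decode(code_bits):
    # Majority vote over each group of REP bits.
    return [int(sum(code_bits[i:i + REP]) > REP // 2)
            for i in range(0, len(code_bits), REP)]


def digest(bits):
    # Hash of the secret; this is what the system stores for verification.
    return hashlib.sha256(bytes(bits)).hexdigest()


def enroll(feature_bits):
    # Bind a fresh random secret to the binary feature vector via XOR.
    secret = [secrets.randbelow(2) for _ in range(len(feature_bits) // REP)]
    helper = [c ^ f for c, f in zip(encode(secret), feature_bits)]
    return helper, digest(secret)


def verify(probe_bits, helper, secret_hash):
    # Unbind with the probe, correct errors, then compare hashes.
    noisy_codeword = [h ^ p for h, p in zip(helper, probe_bits)]
    return digest(decode(noisy_codeword)) == secret_hash


reference = [secrets.randbelow(2) for _ in range(40)]
helper, secret_hash = enroll(reference)

genuine = list(reference)
genuine[0] ^= 1   # one bit error in the first code group
genuine[7] ^= 1   # one bit error in the second code group
impostor = [b ^ 1 for b in reference]

print(verify(genuine, helper, secret_hash))
print(verify(impostor, helper, secret_hash))
```

The comparison yields only a match or non-match decision, never a similarity score, which is the hard-decision behaviour mentioned above. The stored pair `(helper, secret_hash)` is the protected template in this toy setting.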

Finally, BTP must preserve not only recognition performance but also computational efficiency. In BCS, computational cost is dominated by the encoding and decoding of the secret, which is necessary to tolerate intra-class variability (noise).

In this work, we examine a state-of-the-art BCS that addresses many of these concerns and analyze the mechanisms it uses to mitigate each issue. Using its pipeline as a blueprint, we then walk through the design of a novel scheme that addresses additional concerns regarding the error-correcting code (ECC). Switching from soft-decision turbo codes to hard-decision Reed-Solomon codes combined with Index-of-Maximum (IOM) hashing yields deterministic error correction, a simpler implementation, and reduced decoding complexity. We aim to investigate whether this approach can achieve system performance and security guarantees comparable to those of the original scheme while reducing overall transaction complexity.
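
To make the IOM hashing step more concrete, the following is a minimal sketch of the Gaussian-random-projection variant of IOM hashing. The abstract does not specify which variant or which parameters the proposed scheme uses, so the function name `iom_hash` and the parameters `num_hashes`, `q`, and `seed` are assumptions chosen for illustration. Each hash value is the index of the largest of `q` random projections, so the output is a sequence of symbols in the range 0 to `q - 1`, a form that a symbol-based code such as Reed-Solomon could plausibly operate on.

```python
import random


def iom_hash(features, num_hashes, q, seed):
    """Return num_hashes symbols, each the index of the maximum projection."""
    rng = random.Random(seed)  # the seed fixes the random projection vectors
    codes = []
    for _ in range(num_hashes):
        projections = []
        for _ in range(q):
            # One Gaussian random vector per candidate index.
            w = [rng.gauss(0.0, 1.0) for _ in features]
            projections.append(sum(wi * xi for wi, xi in zip(w, features)))
        # Keep only the position of the largest projection, not its value.
        codes.append(max(range(q), key=projections.__getitem__))
    return codes


x = [0.3, -1.2, 0.8, 0.5]
code = iom_hash(x, num_hashes=16, q=8, seed=1)
print(code)
```

Because only the index of the maximum is kept, scaling the feature vector by a positive constant leaves the code unchanged, and the magnitudes of the projections are discarded.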